Ford has done it again, folks. They’ve given us yet another feature no one wants. A newly surfaced patent suggests Ford is working on an infotainment system that can read your lips and analyze your facial expressions when voice commands fail. The idea behind it makes perfect sense, but it raises the question of whether automakers are solving real problems or creating new ones.

A Backup for Noisy Cabins


Voice control has become a staple in modern infotainment systems, even if many drivers rarely use it. Ford’s patent attempts to address one of its biggest weaknesses: background noise. In situations like driving a Mustang with the roof down or a Bronco with removable doors, microphones can struggle to pick up commands clearly. The proposed cloud-based system uses AI, interior cameras, and sensors to track lip movements and facial expressions when the “ambient noise level inside the vehicle is greater than the threshold.”

So, while many buyers are asking Ford to make practical, affordable sedans once again, the American carmaker would rather spend its time on features drivers barely use.

How “Enhanced Mode” Works

USPTO

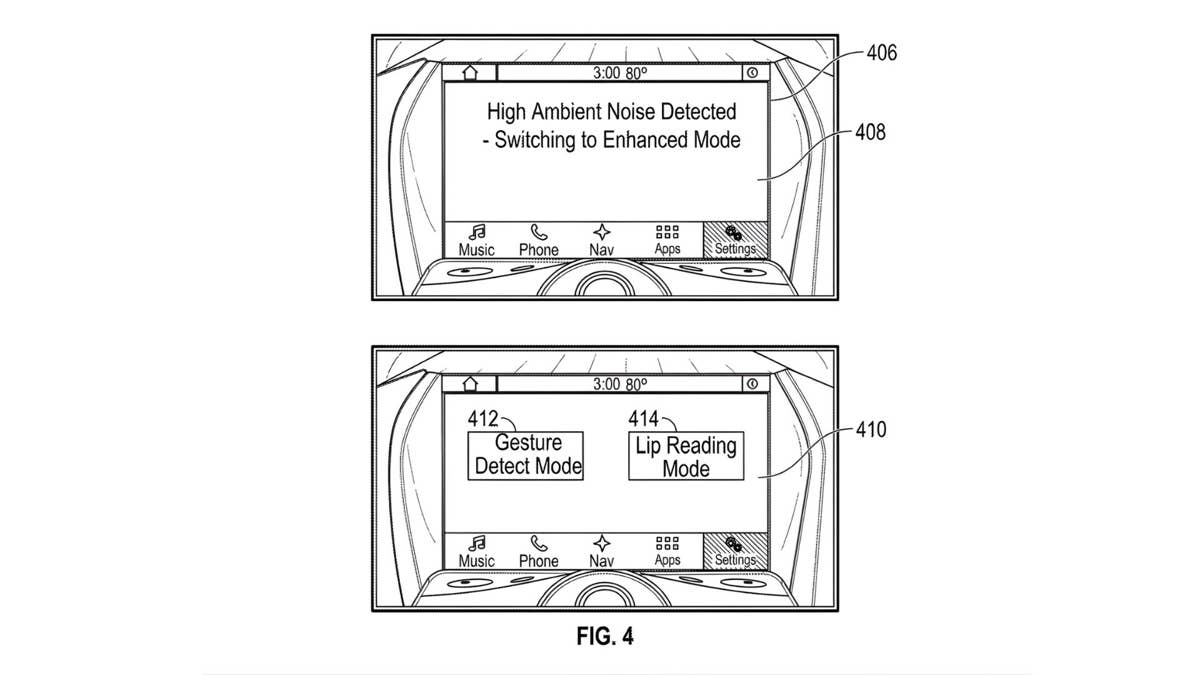

According to the patent, the AI-assisted software won’t activate on its own. Instead, once the vehicle detects a noisy cabin, the infotainment screen prompts the driver to enable “Enhanced Mode.” From there, users can choose between “Lip Reading Mode” and “Gesture Detect Mode.” That means you can whisper, nod your head, frown, and even roll your eyes – Enhanced Mode will interpret it and act accordingly. Clever? Yes. Needed? Absolutely not.
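For the curious, the activation flow described above can be sketched in a few lines of Python. This is only an illustration of the logic as the article describes it, not Ford's implementation: the 70 dB threshold, the function names, and the `InputMode` enum are all assumptions made for the example, since the patent only refers to "the threshold."

```python
from enum import Enum
from typing import Optional


class InputMode(Enum):
    VOICE = "voice"
    LIP_READING = "Lip Reading Mode"
    GESTURE_DETECT = "Gesture Detect Mode"


# Hypothetical value; the patent text only refers to "the threshold".
NOISE_THRESHOLD_DB = 70.0


def should_offer_enhanced_mode(ambient_noise_db: float,
                               threshold_db: float = NOISE_THRESHOLD_DB) -> bool:
    """Offer Enhanced Mode only when cabin noise is greater than the threshold."""
    return ambient_noise_db > threshold_db


def select_input_mode(ambient_noise_db: float,
                      driver_choice: Optional[InputMode] = None,
                      threshold_db: float = NOISE_THRESHOLD_DB) -> InputMode:
    """Return the active input mode.

    The system never switches by itself: without an explicit driver choice
    it stays on ordinary voice control, even in a noisy cabin.
    """
    if not should_offer_enhanced_mode(ambient_noise_db, threshold_db):
        # Quiet cabin: Enhanced Mode is never offered.
        return InputMode.VOICE
    if driver_choice in (InputMode.LIP_READING, InputMode.GESTURE_DETECT):
        # Noisy cabin and the driver opted in via the on-screen prompt.
        return driver_choice
    # Noisy cabin, but the driver declined or ignored the prompt.
    return InputMode.VOICE
```

In this sketch, a loud cabin alone changes nothing; the driver still has to pick one of the two modes from the prompt before the cameras take over from the microphones.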

The system relies on machine learning models trained to interpret these movements, similar to how other automakers like Toyota and BMW are expanding voice tech. With so many brands leaning into AI nowadays, experts warn that the surge in demand for advanced chips may once again lead to an automotive chip shortage.

Innovation vs. Overengineering


As forward-thinking as it sounds, the idea brings some obvious concerns. Privacy is at the top of the list. A system that actively monitors your face, and perhaps even your entire cabin, will not sit well with every driver. And since it’s a cloud-based system, it will depend on a signal, meaning it will be rendered useless when traveling on empty country roads or through tunnels. Not to mention that Ford recently had to recall more than 250,000 SUVs over software issues, which makes us skeptical about the reliability of Ford’s AI-assisted software.

But the main question many drivers will have is: Why? Why do we have to evolve a feature that basically no one uses? Many drivers still prefer simple, physical controls that work instantly without interpretation. While voice commands can be useful for mundane tasks like opening the sunroof or turning on the A/C, physical buttons do the same job, only faster and more simply. And the last thing drivers want is to feel paranoid in their own cars.